Getting Started With Reverb Design, Part 1: Dev Environments

You can design reverb algorithms

Over the years, I’ve received many questions from people who want to try their hand at developing reverb algorithms. In many cases, people have qualified their questions with “I’m not an EE, but…” Well, as an Anthropology major, I can attest that you can design reverbs without having to go through an intensive 4-year academic program!

Set up a development environment

In my opinion, all the theory in the world is useless without being able to HEAR the results. So my suggestion is to start your reverb development path by setting up one or more development environments. Ideally, you want a place where you can hear your work in near-real-time, and run the audio of your choice through your algorithms.

Already know how to code? Use Juce!

Great! My recommendation is to download the Juce SDK. Juce is the framework used by many plugin developers to create their plugins. It handles both the audio and visual parts of a plugin, has targets for all of the popular plugin formats and platforms (AU, VST2, VST3, AAX; Mac, Windows, Linux, iOS, Android), and is free and open-source to get started.

Once you have a plugin you want to release commercially, Juce offers several paid licensing options for closed-source plugins. I would highly recommend installing the Juce SDK, compiling the example plugins, and modifying them as the starting point for your own plugins.

Don’t know how to code? Check out a computer music language.

My suggestion is to work with a computer music language/environment, so you can start learning the fundamentals of digital signal processing and algorithms without having to understand code. When I started programming reverbs in 1998, I was using Csound. This was an old school language even in 1998, but it had all the building blocks I needed to make reverbs:

- Delay lines. A “delay line” allows you to read from and write to a memory buffer and increment through that buffer every sample. The distance between your read and write points determines how much the signal is delayed from the input to the output. An algorithmic reverb will have several to several dozen of these, all at different delay lengths.

- Filters. A digital filter is used to control the high and/or low-frequency balance of a signal. In a digital reverb, filters are often placed in the feedback path of a delay, where the signal is filtered, scaled by a value, and added back into the input.

- Modulators. Many digital reverbs (including all of the Valhalla reverbs) will slowly vary the delay lengths over time. This requires fractional delays that use linear or higher-order interpolation, but that’s a concept beyond this introduction. The important point is that you need some sort of modulator to vary things slowly. These are often low-frequency oscillators or some sort of band-limited noise.

- Add/subtract/multiply. These are the building blocks for creating feedback around the delays, as well as feeding a signal around a delay (as found in allpass delays), scaling the outputs of the reverb, and creating matrices for mixing signals together before they are fed back into the inputs, stuff like that.
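To make these building blocks concrete, here is a minimal C++ sketch of a single delay line with a filter in its feedback path. It is an illustration only, not code from any Valhalla plugin: the class name `DampedFeedbackDelay` and its parameters are hypothetical, the filter is assumed to be a simple one-pole lowpass, and the delay length is fixed (no modulation or fractional delay).

```cpp
#include <cstddef>
#include <vector>

// Hypothetical sketch: one delay line with a one-pole lowpass in the feedback path.
class DampedFeedbackDelay {
public:
    // delaySamples: delay length in samples (must be >= 1).
    // feedback:     gain applied to the filtered signal before it is added
    //               back into the input (keep |feedback| < 1 for stability).
    // damping:      one-pole lowpass coefficient in [0, 1); 0 disables the filter.
    DampedFeedbackDelay(std::size_t delaySamples, float feedback, float damping)
        : buffer_(delaySamples, 0.0f), feedback_(feedback), damping_(damping) {}

    float process(float input) {
        // The buffer length equals the delay, so the slot we are about to
        // overwrite holds the sample written delaySamples ago.
        const float delayed = buffer_[pos_];

        // Filter: a one-pole lowpass darkens the signal on each trip around the loop.
        lowpassState_ = (1.0f - damping_) * delayed + damping_ * lowpassState_;

        // Multiply and add: scale the filtered signal and mix it with the input.
        buffer_[pos_] = input + feedback_ * lowpassState_;

        // Increment through the buffer every sample, wrapping at the end.
        pos_ = (pos_ + 1) % buffer_.size();
        return delayed;
    }

private:
    std::vector<float> buffer_;
    std::size_t pos_ = 0;
    float feedback_;
    float damping_;
    float lowpassState_ = 0.0f;
};
```

Feeding a single 1.0 followed by zeros into `DampedFeedbackDelay(4, 0.5f, 0.0f)` produces an echo every 4 samples, each half as loud as the last. Raising `damping` makes each repeat duller as well as quieter, which is roughly how high frequencies die away faster than lows in a real room.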

Today, you have many real-time options to experiment with these fundamental computer music building blocks. Most modern music DSP environments use a visual environment to patch signal processing modules together in a similar way to an analog modular synthesizer.

- Pure Data is a free, open-source real-time computer music environment that runs on pretty much everything – Windows, Mac, Linux, embedded hardware, you name it. It isn’t the prettiest language from a visual standpoint, but it is very powerful. For reverb development, you’ll want to use one of the Pd-Extended branches, and find something that has an allpass~ unit generator, as this is a CRITICAL building block for algorithmic reverbs.

- Max/MSP is a commercial, closed source, prettier version of Pure Data. It has nice delay and allpass ugens built in. More importantly, it has several decades of useful example code.

- Max4Live is a fairly recent adaptation of Max/MSP that runs as audio and MIDI effects inside of Ableton Live. It is a VERY powerful environment to get started in, especially if you use Live as part of your musical workflow. I started working with Max4Live in early 2020, to create some examples for lectures at the University of Victoria in Victoria, BC. It took me about a week to get up and running. By the time I gave the lectures, I was able to develop a few algorithms that became the core of ValhallaSupermassive. You can get a LOT done in Max4Live.

- Bitwig Studio was recently updated with the Grid environment, which has 170+ DSP blocks that can be patched together in a visual environment. I haven’t tried this yet, but I’ve heard of other people having success patching together reverbs with the allpass delays.

- Reaktor has had some nice reverb examples for the last few decades, so it is worth downloading the various reverbs in that environment and opening them up to see how they work.

Want to learn how to code? Focus on C++.

Most of my DSP code is in C++, although it is closer to “C with classes” – I tend not to use advanced C++ functionality in my low-level DSP blocks. C++ is used for Juce, and for many other audio SDKs, so learning C++ will be super useful.

Getting up and running in a visual DSP language can be much faster than in a text-based one. It is much quicker to prototype a simple reverb in Max/MSP or Max4Live than to create an entire DSP and GUI code base in C++.

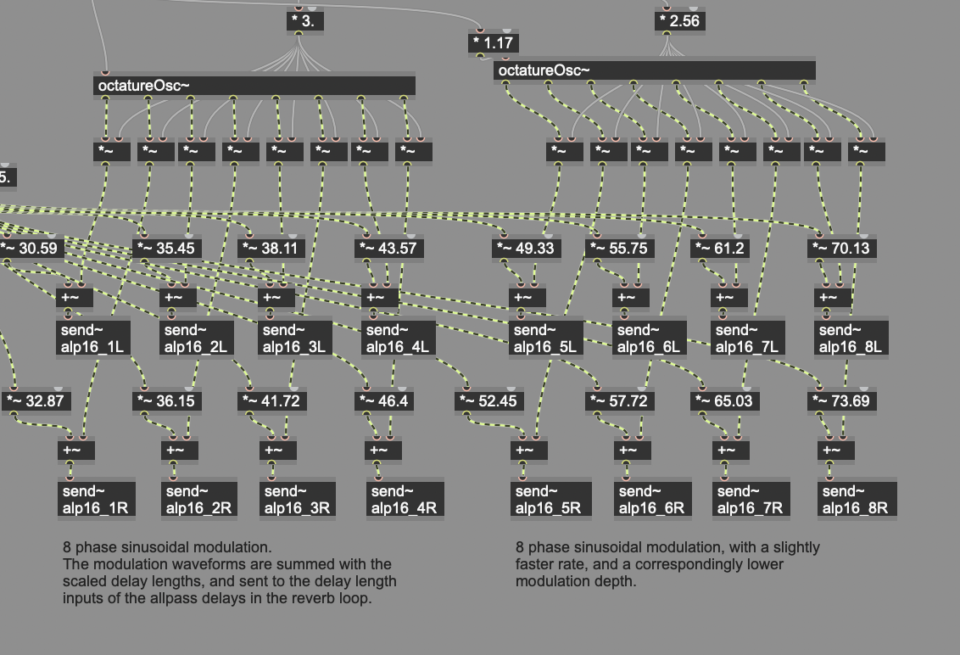

The drawback of a visual language is that a complicated reverb will quickly turn into something that looks like a pile of necklaces that have become knotted together – it is difficult to follow. Text-based languages usually have the benefit of for() loops or something similar to churn through repetitive actions, which is a lot of what reverbs end up doing. Still, if you are just getting started, I’d highly recommend trying one of the visual languages, and seeing how far it can take you.

In my next blog post, I’ll list what I consider to be the “canonical” papers in reverb literature. Thanks for reading!

Thanks, Sean! I’m just getting started using Juce. Even for someone like me who has written code in various languages professionally for years, the DSP stuff can be a bit difficult to grok. Your work is an inspiration. Thanks for sharing your knowledge as well.

Hi Sean,

This is incredible. I was thinking about this topic a lot. I downloaded the VST SDK years ago but was scared away by the sheer complexity of the VST 3 standard. IPlug2 or JUCE was in the pot, and the DSP stuff was the scariest part. Thanks a lot for sharing your knowledge!

I approached Max4Live as a 25+ year C++ software developer. And I really didn’t like it.

The basics are laid out in the documentation, but when I had two days to implement four Live automation functions and only managed half, my experience was a bummer. You go online to the forums and there’s a question similar to yours and then Bam! here’s an answer with no commentary about how it works. No comments in the code whatsoever. The original asker: “thanks awesome man!”

I’ve used LabVIEW, another graphical programming language mainly geared for electronics systems measurements, for 16 years. Its documentation is much better and I can generally solve my problems. But I don’t like it either. The problem with these languages is that beyond a certain level of complexity there are no good strategies for managing it or documenting it. So one mind figures out how to do something brilliant, but cannot convey how it works to another in most cases (or even to himself a couple years later.)

I cannot comprehend the Max code you excerpted above. It has been a year and a half since I tried in earnest, so it’s fair that I shouldn’t be able to without going back to the docs. But maybe 50%? 30%? C/C++/Python have a tremendous advantage here.

In general, I think graphical programming languages aren’t great for reverb programming. Reverbs tend to have a ton of repetitive elements in series and/or parallel, and these turn into a plate of spaghetti in visual formats. A nice for() loop is so much easier for dealing with series & parallel elements.

Max4Live wasn’t suggested for its programming elegance. It was suggested because it is right there, in the most popular DAW that our customers work with (Ableton Live). It takes a while to get things going in Max4Live, but once you have stuff working, you have an object that can be used in all your Live projects, complete with GUI. Having real-time audio going through your algorithm, and the ability to tweak parameters in real time, is far more important than programming elegance when learning audio DSP in my experience.

The Max code I posted was posted in order to have an image for the blog post. You should see the full algorithm – it is nightmarishly complicated in visual form. And super elegant in C++ for ValhallaSupermassive (but not a particularly great visual).

Wow, I’m only finding this series now. Thank you for sharing. When I began dabbling in programming for sound using ChucK and Pure Data, I didn’t have an overarching desire to tangle with the complexity of reverb, but reading your thoughts on the subject is planting the seed. I’ve taken baby steps working with JUCE and expect that the material you refer to will keep me busy for years, especially considering my primary vocation in the maritime industry allows only a few hours a week to program and play music.

I appreciate your comment on the pile of tangled necklaces. I have hundreds of hours into a PD implementation of something like the Echoplex Digital Pro under my belt, and I can barely understand it, let alone explain it to someone else, after letting it sit for a few years. That said, being able to route sound through it and test ideas in near real time afforded me a comfortable development environment where experimentation drove the development process.

Thank you for sharing your thoughts about this! I have been wanting to make plugins/effects for a while. Reverb has always seemed a little ‘scary’, but your post makes it more accessible.

Are you using something like JUCE yourself? Or are you using any other plugin framework?

I use Juce for all the Valhalla plugins. The DSP library is my own, and exists outside of Juce (I have a very lightweight plugin class that wraps into Juce).

Hi Sean,

I heard you on a couple of YouTube videos and podcasts, your enthusiasm for your work is incredibly contagious.

These blogs are awesome, and I hope you keep doing things like this. I come to them as a long-time effects enthusiast who has gotten more and more interested in DSP. I have recently read both volumes of Musimathics and I am trying to learn DSP without any prior experience in programming. Your materials, books of reference and ideas on how to approach learning are super valuable. Thanks!